In this guide, we’ll explore few-shot prompting with large language models (LLMs): a technique that improves your LLM’s output and teaches it to mimic your style, even when the examples come from a large dataset. Let’s get started!

Few-shot prompting basics

Few-shot prompting is a technique in which you give an LLM a handful of examples showing how you want the response to look. The LLM then generates a response that mimics those examples. This technique can significantly improve the quality of your LLM’s output.

A real-life example: sales response automation

Suppose you want to create a sales automation Tool that can respond to emails and LinkedIn messages automatically. You can use few-shot prompting to guide your LLM toward responses that align with your style and meet your specific rules and objectives. For instance, LinkedIn messages tend to be shorter and more specific than emails.

1. Create the sales response automation Tool

To start, create a new Tool. Add a long text input to hold the message we want to respond to. Next, add an LLM component. Don’t forget to hit “Save” on the top right to save your Tool.

2. Add examples to your LLM prompt

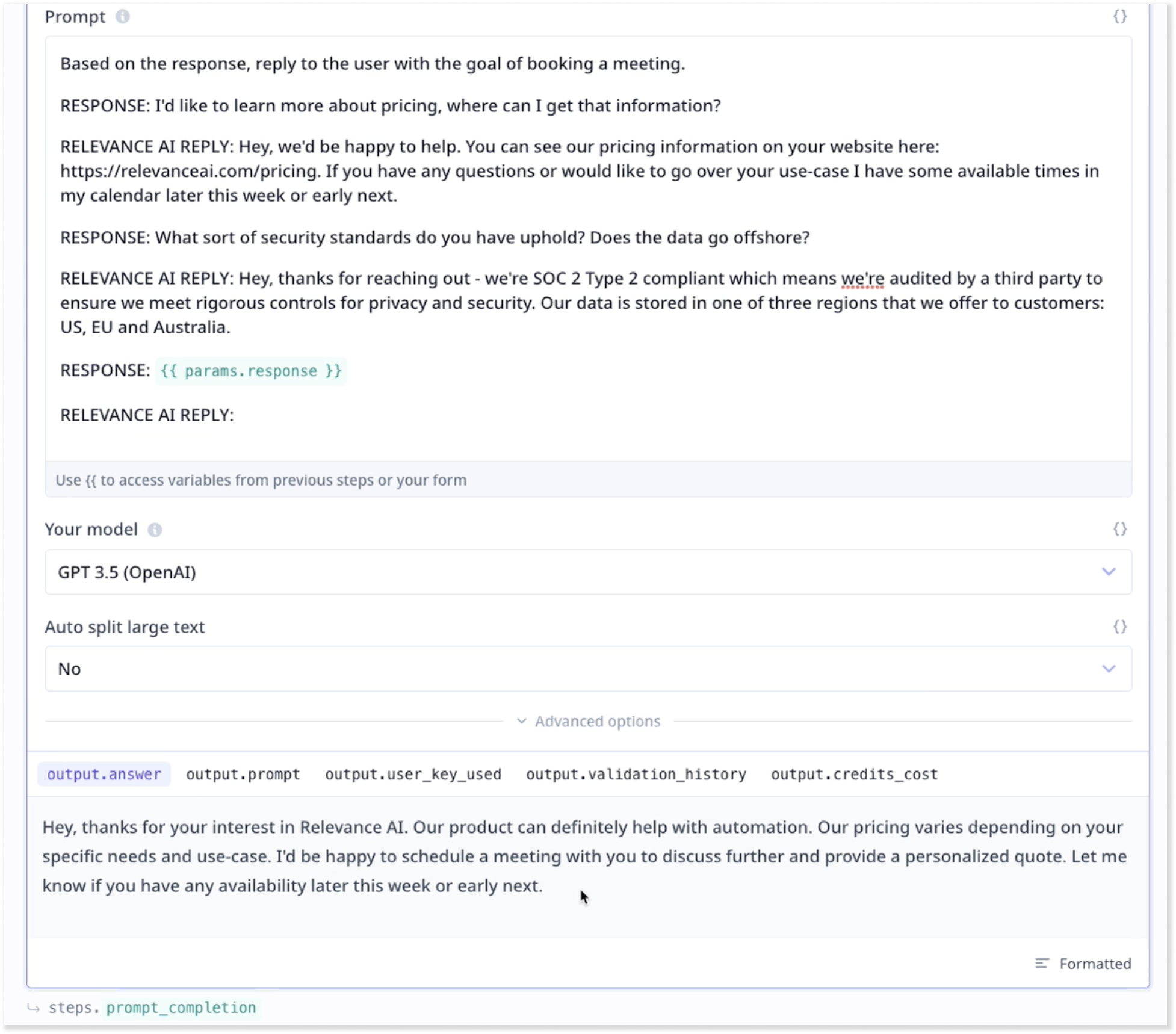

We want to include messages that prospects have previously sent us, along with our responses to them. These prospect-Relevance AI LinkedIn message pairs serve as examples. In general, the more examples we include, the better we can expect the LLM to perform. Enter a few messages and your corresponding responses, labelling each with identifiers such as “Message” and “Response” or “Received” and “Reply”. In our example, we used “Response” and “Relevance AI reply”. The pattern shows the LLM what the expected task is. At the end, use {{}} to insert the value of the input component (the message to answer), followed by the reply identifier. A sample is shown in the image below.
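To make the prompt pattern concrete, here is a minimal Python sketch of how such a few-shot prompt could be assembled outside the Tool builder. The function name `build_few_shot_prompt` and the example messages are invented placeholders for illustration; only the two identifiers (“Response” and “Relevance AI reply”) come from this guide.

```python
def build_few_shot_prompt(examples, new_message,
                          received_label="Response",
                          reply_label="Relevance AI reply"):
    """Assemble a few-shot prompt from (received, reply) pairs."""
    blocks = []
    for received, reply in examples:
        blocks.append(f"{received_label}: {received}\n{reply_label}: {reply}")
    # End with the new message and an open reply label for the LLM to complete.
    blocks.append(f"{received_label}: {new_message}\n{reply_label}:")
    return "\n\n".join(blocks)


# Hypothetical example pairs; replace with your own past messages and replies.
examples = [
    ("Hi, what does your pricing look like?",
     "Thanks for reaching out! Happy to share pricing. Do you have 15 minutes this week?"),
    ("Can you integrate with our CRM?",
     "Great question. Yes, we can. Which CRM are you using?"),
]
prompt = build_few_shot_prompt(examples, "Do you offer a free trial?")
print(prompt)
```

Ending the prompt on an open reply label mirrors the step above: the repeated pattern tells the LLM that its job is to write the next reply.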

3. Use a training database

A few samples help shape how the LLM writes messages. But what if we go a few steps further and provide a whole dataset of samples? Upload a CSV file containing samples of received messages and your corresponding replies. Make sure to enable knowledge. See our short guide on best practices for preparing your CSV data.

4. Search in knowledge

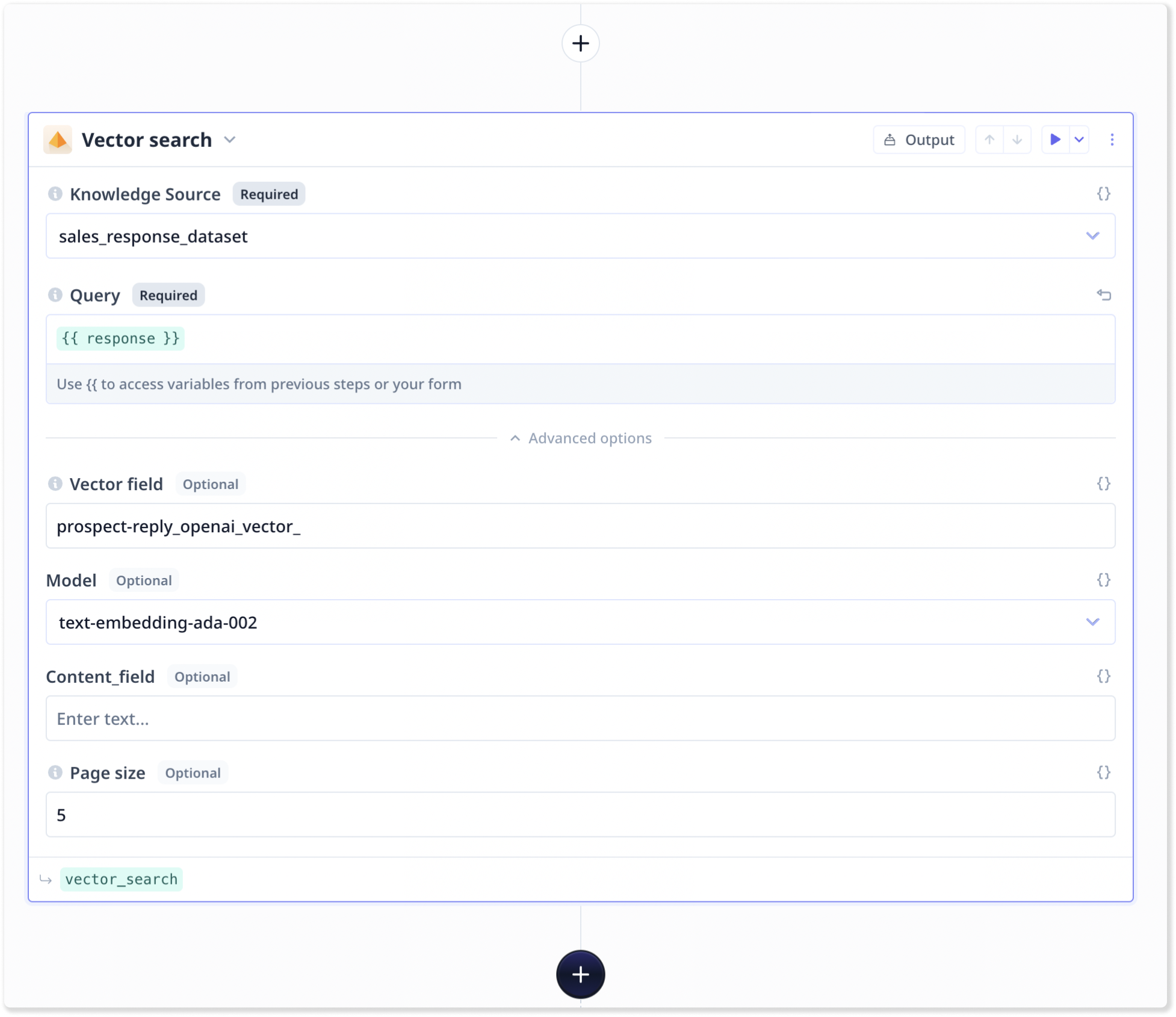

We want to create our own few-shot prompting technique based on an entire dataset. This can be achieved by using a search step to find the most similar past response(s) to the current input. So, we need to add a Knowledge search component to our Tool and configure the step:

- Select your dataset from the first drop-down.

- Use {{}} to set the query to the input component (e.g. {{response}}) that we want the LLM to provide an answer to.

- Specify the name of the vector field corresponding to the incoming messages in your dataset.

- Increase the number of results to 10+ (e.g. 15, 30).

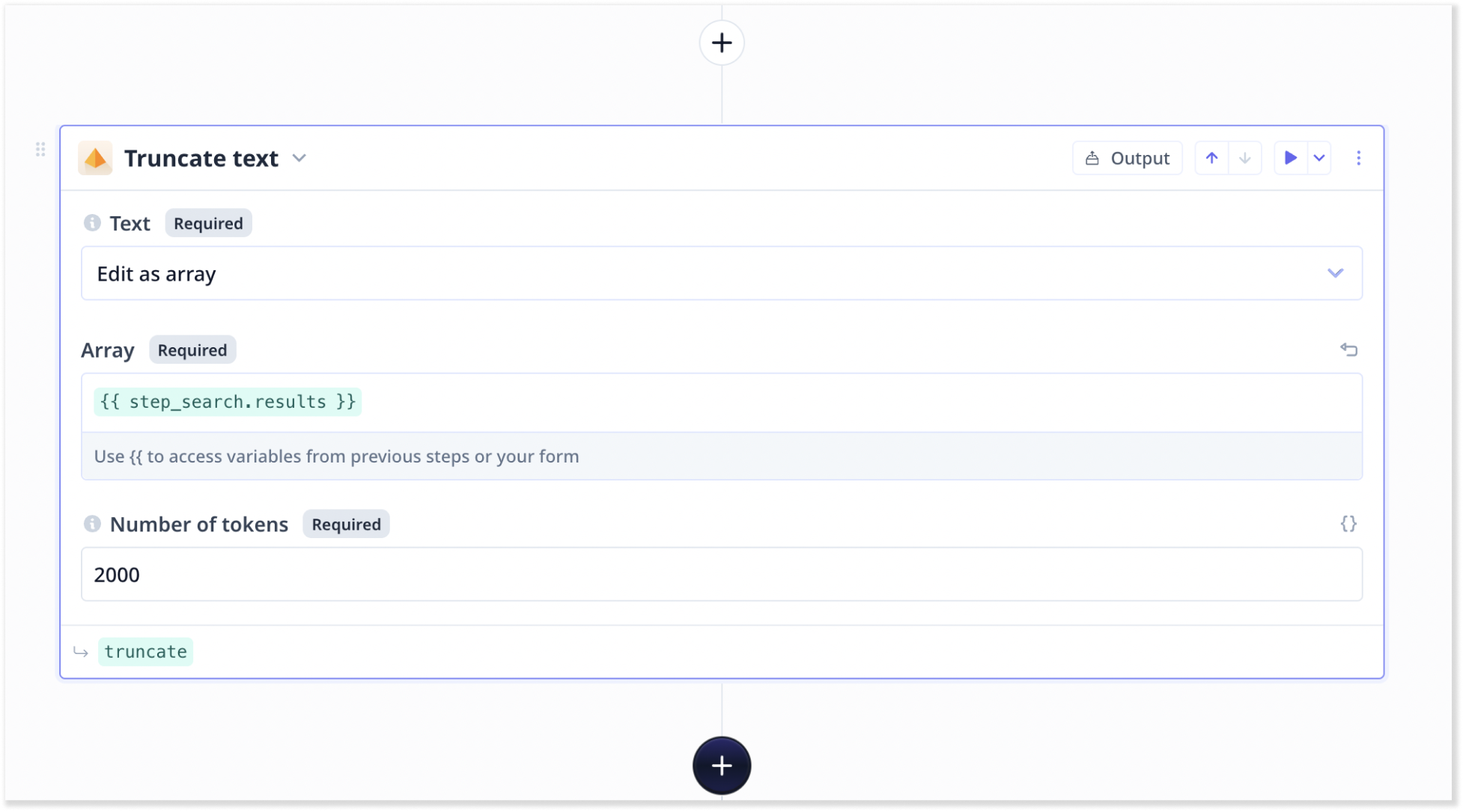
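The idea behind the search step can be sketched in a few lines of Python. This is not how the Knowledge search component is implemented: it substitutes simple word counts for real vector embeddings, and the dataset rows, field names (`message`, `reply`) and function names are all hypothetical. It only illustrates "rank past messages by similarity to the query and keep the top results".

```python
import math
import re
from collections import Counter


def vectorize(text):
    """Toy stand-in for an embedding: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def search(dataset, query, k=15):
    """Return the k rows whose 'message' field is most similar to the query."""
    q = vectorize(query)
    ranked = sorted(dataset,
                    key=lambda row: cosine(q, vectorize(row["message"])),
                    reverse=True)
    return ranked[:k]


# Hypothetical rows, standing in for the uploaded CSV of messages and replies.
dataset = [
    {"message": "What does your pricing look like?", "reply": "Happy to share pricing details."},
    {"message": "Can you integrate with our CRM?", "reply": "Yes, which CRM do you use?"},
    {"message": "Is there a free trial?", "reply": "Yes, you can start for free."},
]
results = search(dataset, "Do you have a free trial?", k=2)
for row in results:
    print(row["message"], "->", row["reply"])
```

The retrieved message and reply pairs then play the same role as the hand-written examples from step 2, except that they are chosen per incoming message.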

5. Add a ‘Truncate text’ step

We need to add a ‘Truncate text’ step to truncate the results. This step takes an array, here the result of our previous search step, and limits the size of the objects within it to a certain number of tokens. Tokens are the units of text an LLM processes, roughly comparable to words or word pieces, and LLMs can only accept a limited number of them. In this example, we set the limit to 2000.
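As a rough illustration of what truncating to a token budget means, the Python sketch below keeps search results in order until an approximate budget is used up. It rests on two assumptions that may not match the actual ‘Truncate text’ step: the budget is applied to the array as a whole, and tokens are estimated as whitespace-separated words (real tokenizers count differently). The function name `truncate_results` is hypothetical.

```python
def truncate_results(results, max_tokens=2000):
    """Keep whole results, in order, until the approximate token budget is used up."""
    kept, used = [], 0
    for text in results:
        # Crude estimate: one token per whitespace-separated word.
        cost = len(text.split())
        if used + cost > max_tokens:
            break
        kept.append(text)
        used += cost
    return kept


chunks = ["one two three", "four five", "six seven eight nine"]
kept = truncate_results(chunks, max_tokens=5)
print(kept)
```

Because the search results are ordered by similarity, cutting from the end drops the least relevant examples first.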

6. Feeding the truncated results into the LLM

Instead of entering examples manually, we can now include the chunks output by the previous ‘Truncate text’ step in our LLM prompt. Step outputs are accessed via {{}} and the name of the step. A sample is shown below:

Our final LLM prompt: