You can now track how your agents perform in real-world scenarios with comprehensive production monitoring! Just like software development has build → test → monitor stages, agent development now has the same complete lifecycle. After building your agents and testing them with Evals, you need visibility into production performance at scale.

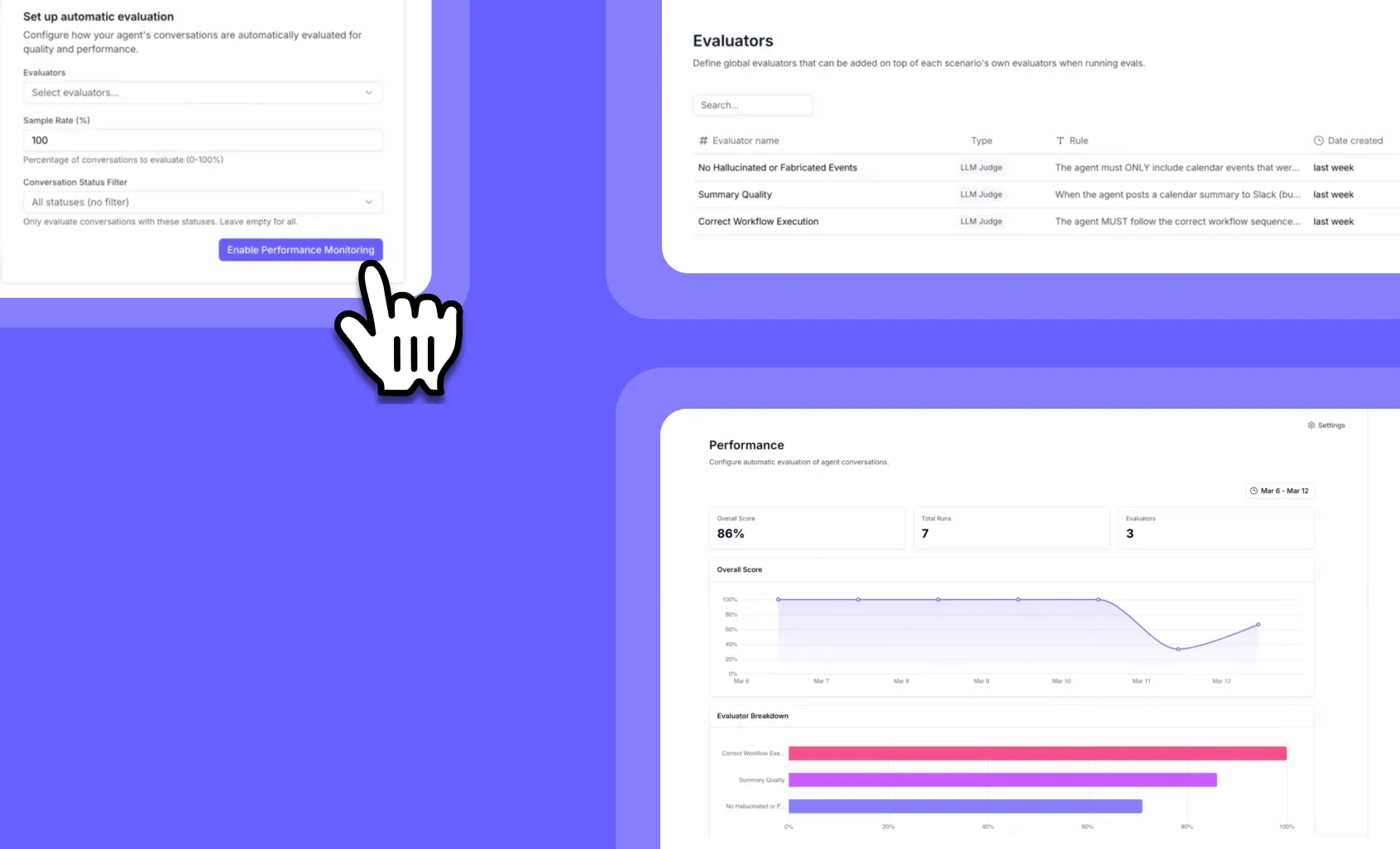

➡️ Automatic evaluation – Set up LLM judges to continuously assess your agents on custom criteria like summary quality, hallucination detection, and workflow execution

➡️ Performance dashboards – View overall scores, breakdowns by evaluator, and trends over time to spot issues before they become problems

➡️ Smart sampling – Run evaluations on 10%, 50%, or 100% of conversations to balance cost and coverage based on your volume

➡️ Detailed investigation – Filter and drill down into specific failed runs to understand exactly what went wrong and when
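
To make the sampling trade-off concrete, here is a minimal Python sketch of how a percentage-based sample could be drawn from a stream of conversations. It is an illustration only, not how Relevance AI implements smart sampling: the `should_evaluate` function, the `conv-…` IDs, and the hash-based bucketing are all assumptions made for this example.

```python
import hashlib


def should_evaluate(conversation_id: str, sample_rate: float) -> bool:
    """Decide whether a conversation falls inside the sampled fraction.

    Hypothetical helper: hashing the ID makes the decision deterministic,
    so the same conversation is always either in or out of the sample.
    """
    if not 0.0 <= sample_rate <= 1.0:
        raise ValueError("sample_rate must be between 0 and 1")
    digest = hashlib.sha256(conversation_id.encode("utf-8")).digest()
    # Treat the first 8 bytes of the hash as an integer in [0, 2**64).
    bucket = int.from_bytes(digest[:8], "big")
    # A conversation is sampled when its bucket lands below the cutoff.
    return bucket < sample_rate * 2**64


# Made-up conversation IDs, used only to show the effect of each rate.
conversation_ids = [f"conv-{i}" for i in range(10_000)]
for rate in (0.1, 0.5, 1.0):
    sampled = sum(should_evaluate(cid, rate) for cid in conversation_ids)
    print(f"rate={rate:.0%}: {sampled} of {len(conversation_ids)} conversations evaluated")
```

A lower rate means fewer LLM-judge calls and lower cost, at the price of less coverage. With this bucketing scheme, raising the rate only adds conversations to the sample, so anything evaluated at 10% is also evaluated at 50%.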

With Agent Performance Observability, you can catch performance degradations immediately—like when a model update changes behavior or agents start hallucinating—instead of discovering issues weeks later.

🔐 Permissions required: Available exclusively for select Enterprise customers. Agent Performance Observability delivers the advanced monitoring capabilities needed for mission-critical AI deployments. This feature is not available on non-Enterprise plans.

To access Agent Performance Observability, Enterprise users can go to their agent’s dashboard and select the “Monitor” tab, then navigate to the “Test Suites” section. If you’re on an Enterprise plan and don’t yet have access, contact your Relevance AI sales representative to register interest.

Keep your AI workforce running at peak performance with enterprise-grade monitoring!